Graphic created with Nano Banana, Gemini Flash 2.5.

One of the most important things to understand about AI tools is that the current ecosystem is still new, with many new entrants offering similar capabilities. New tools appear every day for nearly every task, but many of them are simply wrappers around a foundational program like ChatGPT, with prompts programmed in. There is a lot of duplication, so it pays to ask yourself: “Can I do this myself with a platform like ChatGPT, or should I use this tool?”

Less competition in the avatar space: HeyGen and Synthesia

One area with less competition, but still a lot of confusion, is using avatars and AI or cloned voices to create videos, mainly for training in enterprise settings but also for plenty of uses outside of business.

The two main avatar programs are HeyGen (www.heygen.com) and Synthesia (www.synthesia.io).

According to All About AI’s 2024–2025 analysis, 72% of businesses and others using AI avatars report higher training ROI.

Translation capabilities

After doing a deep dive with All About AI, I realized that although HeyGen has only 40 languages versus 120 for Synthesia, I will probably use only 2 or 3 languages, mainly English and Spanish, for training.

While a large corporation making training videos might care about having 120 languages, it’s not a concern for smaller businesses or individual trainers and teachers.

And while it’s true that avatar training works really well in the corporate setting, it is also an excellent platform for learning all kinds of things, like crocheting, reading tarot cards, cooking root vegetable soup, or getting help with how you hold your mouth when trying to pronounce new words in French.

Pixelation Matters

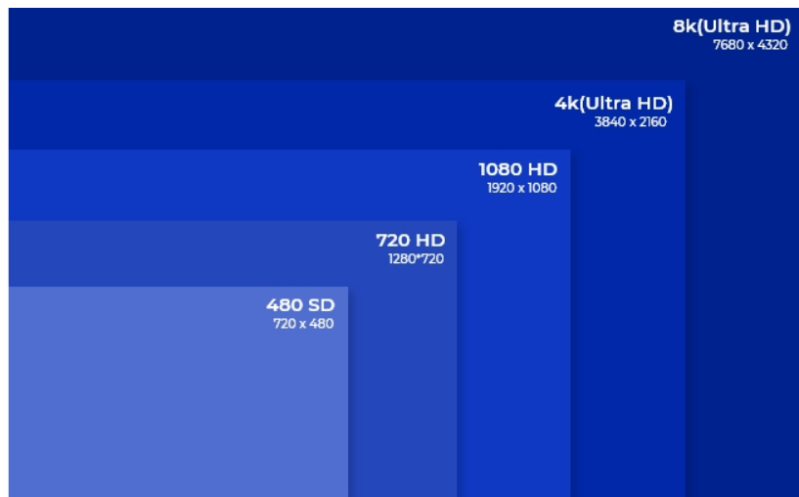

The number of pixels determines resolution, and with it how clear and precise your avatar and the other imagery appear. Resolution falls into categories defined by pixel dimensions. In the chart above, sourced from reolink.com, you can see the progression from 480p, which is standard definition, through 720p, the entry level of high definition (HD), up to Ultra High Definition (UHD).

For my work, I use either 1080p (Full HD) or 720p (1280 × 720 pixels, the first tier of HD), which is not high-res by today's standards but is suitable for mobile phones. Also, the higher the definition of your visuals, the longer they take to download and the more computing capacity you need. If you have a basic laptop, downloading a 4K UHD video may be beyond the machine's capacity and can take a day or more. UHD work requires UHD-level hardware and bandwidth. While heavy enterprise work at scale may require UHD, it is not needed for basic day-to-day work or academic operations.
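To make the link between resolution and download weight concrete, here is a minimal Python sketch. The pixel dimensions are the standard ones for each tier; the file size and connection speed in the usage line are hypothetical numbers chosen only for illustration, and the helper names are mine, not from any avatar tool.

```python
# Standard pixel dimensions (width, height) for common video tiers.
RESOLUTIONS = {
    "480p (SD)": (854, 480),
    "720p (HD)": (1280, 720),
    "1080p (Full HD)": (1920, 1080),
    "2160p (4K UHD)": (3840, 2160),
}


def pixels_per_frame(label):
    """Total pixels in one frame at the given resolution tier."""
    width, height = RESOLUTIONS[label]
    return width * height


def download_seconds(file_size_mb, speed_mbps):
    """Rough transfer time: megabytes converted to megabits, divided by megabits per second."""
    return file_size_mb * 8 / speed_mbps


# Compare every tier against 720p.
base = pixels_per_frame("720p (HD)")
for label in RESOLUTIONS:
    count = pixels_per_frame(label)
    print(f"{label}: {count:,} pixels per frame, {count / base:.2f}x 720p")

# Hypothetical example: a 500 MB video on a 50 Mbps connection.
print(f"Estimated download: {download_seconds(500, 50):.0f} seconds")
```

A 4K UHD frame holds nine times as many pixels as a 720p frame, which is why file sizes, download times, and hardware demands climb so quickly at the top of the chart. Actual file size also depends on compression and video length, so treat the estimate as a rough guide.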

Here’s the takeaway: You can use HeyGen for basic training needs, with the advantage of photo-to-avatar capabilities, and Synthesia for enterprise-wide training at scale. Regardless of which you choose, you can save A LOT of time (text-to-speech alone is a game-changer) and achieve the goal of helping those on the other side of the screen learn effectively.